
The Databricks Lakehouse Platform integrates with cloud storage and security in your cloud account, and manages and deploys cloud infrastructure on your behalf. These two new offerings are now generally available. Databricks is a unified set of tools for building, deploying, sharing, and maintaining enterprise-grade data solutions at scale.

Hello! I recently took a Databricks course on Delta Lake. I enjoyed going through it, and it taught me a lot about the product's various features. Delta Lake is a transaction-enabled data lake product that Databricks has been developing since 2016.

Just like in a traditional data warehouse, there are some simple rules of thumb to follow on Delta Lake that will significantly improve your Delta star schema joins. The basic steps to success: use Delta tables to create your fact and dimension tables, and optimize your file size for fast file pruning.

Informatica no-code data pipelines are easy to build, offer much faster development times, and are much easier to reuse and maintain than hand-coded pipelines. Informatica also released new data governance capabilities to extract data lineage from hand-coded Databricks notebooks in PySpark and Spark SQL. Extracting data lineage from hand-written code is normally extremely difficult. With Informatica’s new Advanced Scanners for Databricks, Informatica can capture complete data lineage regardless of whether Databricks users develop with no-code tools or by hand-coding, which creates transparency and trust in the data assets in customers’ Databricks lakehouses.

DDI provides users with services in data analysis, data engineering, data science, and AI to build an integrated lakehouse architecture. For further reading, see the book Data Engineering with Apache Spark, Delta Lake, and Lakehouse.

Streaming data from Apache Kafka into Delta Lake is an integral part of Scribd’s data platform, but has been challenging to manage and scale. We use Spark Structured Streaming jobs to read data from Kafka topics and write that data into Delta Lake tables.
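To make the Kafka-to-Delta pattern described above more concrete, here is a minimal sketch of a Spark Structured Streaming job. It is not Scribd's actual pipeline. The function name `start_kafka_to_delta`, its parameters, and the choice of `startingOffsets` are assumptions made for illustration, and it presumes a `SparkSession` that already has the Kafka source and Delta Lake packages available.

```python
def start_kafka_to_delta(spark, bootstrap_servers, topic, table_path, checkpoint_path):
    """Start a streaming query that appends one Kafka topic to a Delta table.

    All argument names are hypothetical; `spark` is an existing SparkSession
    configured with the Kafka connector and Delta Lake.
    """
    # Read the topic as an unbounded streaming DataFrame.
    raw = (
        spark.readStream
        .format("kafka")
        .option("kafka.bootstrap.servers", bootstrap_servers)
        .option("subscribe", topic)
        .option("startingOffsets", "earliest")  # assumption: replay from the start
        .load()
    )

    # Kafka delivers key and value as binary, so cast them to strings
    # and keep the standard metadata columns for traceability.
    events = raw.selectExpr(
        "CAST(key AS STRING) AS key",
        "CAST(value AS STRING) AS value",
        "topic",
        "partition",
        "offset",
        "timestamp",
    )

    # Append to the Delta table; the checkpoint directory lets the query
    # resume from its last committed offsets after a restart.
    return (
        events.writeStream
        .format("delta")
        .outputMode("append")
        .option("checkpointLocation", checkpoint_path)
        .start(table_path)
    )
```

Calling the function with a live session returns a streaming query handle, and calling `awaitTermination()` on that handle blocks until the stream stops. Each stream should get its own checkpoint location, since sharing one between queries corrupts offset tracking.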

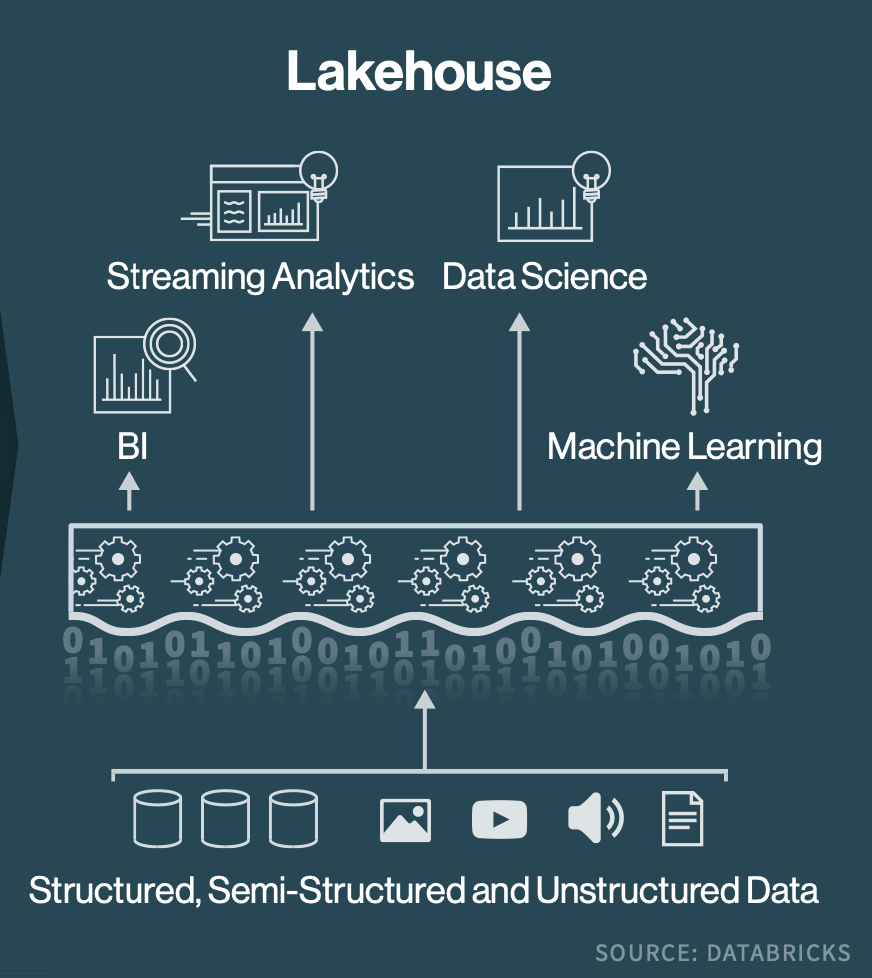

Databricks Lakehouse is centered around a technology named Delta Lake, an open source project managed by the Linux Foundation. Informatica recently announced advanced data integration capabilities that make it easier for organizations to quickly share data using Informatica and Databricks. Informatica Intelligent Data Management Cloud (IDMC) now offers cloud-native, no-code data integration that natively transforms data within Databricks SQL. This is an important step that enables data engineering professionals to leverage the performance and scale of Databricks while benefiting from the ease and speed of development with Informatica Cloud Data Integration.

The Party at the Lakehouse Just Got a Whole Lot Bigger!
